MMP Attribution Improvement: How to Fix Your Mobile Measurement Data and Stop Wasting Ad Spend

With IDFA opt-in rates sitting between 15% and 30%, your mobile measurement partner (MMP) is making more educated guesses than ever. Fraudsters know this. And while your MMP works hard to attribute installs accurately, it was never built to stop bad traffic from entering the funnel in the first place.

This post explains exactly where MMP attribution breaks down, how to fix it, and why layering click fraud protection on top of your MMP is the most commercially effective move you can make right now.

What Is MMP Attribution and Why Does It Matter?

MMP attribution is the process by which a mobile measurement partner tracks, records and assigns credit for app installs and in-app events to the correct advertising source. Every time a user clicks an ad, downloads your app or completes a purchase, your MMP is logging the data trail that connects that action back to a specific campaign, channel or creative.

Without accurate MMP attribution data, you have no reliable basis for budget allocation, channel performance analysis or ROAS calculation. It is, in the most literal sense, the foundation of performance marketing for mobile apps.

How Mobile Measurement Partners Work: SDK, Attribution Models and Data Flow

Most MMPs operate via an SDK embedded in your app. When a user clicks an ad, the MMP records a timestamped click. When the user installs the app and opens it, the SDK fires and the MMP attempts to match that install to a recorded click using device identifiers, probabilistic matching or SKAN signals.

Attribution models determine which click gets credit:

- Last-click attribution: credit goes to the final ad click before install

- First-click attribution: credit goes to the first touchpoint in the user journey

- Multi-touch attribution: credit is distributed across multiple touchpoints

- Incrementality: not a credit-assignment rule as such, but a complementary method that measures the true causal lift of an ad campaign

Most MMP attribution in the market still defaults to last-click. This matters a great deal when fraud enters the picture.
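To make the difference between these models concrete, here is a minimal Python sketch. It is illustrative only: the function name, the `linear` model (one simple form of multi-touch) and the sample journey are assumptions for this example, not any MMP's actual logic.

```python
from collections import defaultdict


def assign_credit(touchpoints, model="last_click"):
    """Return {source: credit} for a single install.

    touchpoints: list of (timestamp, source) tuples for clicks before the install.
    """
    if not touchpoints:
        # No recorded click: treat the install as organic
        return {"organic": 1.0}

    ordered = sorted(touchpoints)  # oldest click first

    if model == "last_click":
        return {ordered[-1][1]: 1.0}
    if model == "first_click":
        return {ordered[0][1]: 1.0}
    if model == "linear":
        # Simplest multi-touch variant: split credit evenly across clicks
        credit = defaultdict(float)
        share = 1.0 / len(ordered)
        for _, source in ordered:
            credit[source] += share
        return dict(credit)
    raise ValueError(f"unknown model: {model}")


# Hypothetical journey: three clicks from three sources before one install
journey = [(100, "network_a"), (250, "social_b"), (399, "network_c")]
print(assign_credit(journey, "last_click"))
print(assign_credit(journey, "first_click"))
print(assign_credit(journey, "linear"))
```

For this journey, last-click hands all credit to `network_c`, first-click to `network_a`, and the linear model gives each source a third. Note that under last-click, whoever lands the final click before install takes everything, which is exactly the property fraud techniques target.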

Why Your MMP Is Your Single Source of Truth and Why That Is a Problem

Your MMP aggregates attribution data from every channel, network and campaign into a single dashboard. For mobile app marketers, it is the authoritative record of what is driving growth. The problem is that if the data feeding into that record is compromised, every decision downstream is wrong. Budget goes to the wrong channels. Poor performers look like winners. Organic growth gets buried under inflated paid metrics.

An MMP is only as reliable as the traffic it is measuring. If that traffic is contaminated with fraud, your single source of truth becomes a single source of misinformation.

The MMP Attribution Blind Spot: Why Your Data May Be Wrong

The core problem is not that MMPs are poorly built. It is that they were designed to measure traffic, not police it. Fraud prevention is a secondary function in most MMP platforms, and it shows.

How Fraudsters Exploit Last-Click Attribution to Steal Credit

Last-click attribution is the mechanism fraudsters exploit most aggressively. Here is how the main techniques work:

Click injection: Malware on a user's device detects when an app is downloading and fires a fraudulent click at the last moment, hijacking attribution credit from the legitimate source.

Click flooding: Fraudulent networks fire millions of clicks against a user pool, statistically ensuring some will match to an install and claim the reward.

Install hijacking: A fraudulent SDK intercepts a genuine install event mid-flow and reports it to the MMP as its own.

SDK spoofing: Sophisticated bots simulate the full install and engagement sequence, generating fake attribution events without any real device being involved.

In 2025 and 2026, AI-powered fraud bots are making these techniques harder to detect. They mimic organic behaviour patterns, introduce timing variance and rotate device signatures to evade rule-based detection. The old filters are not keeping pace.
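One widely used first-pass signal for click injection and click flooding is click-to-install time (CTIT): injected clicks tend to land seconds before the install, while flooded clicks often sit a long way from it. The sketch below is a simplified illustration, and the thresholds are hypothetical values chosen for the example. A fixed rule like this is precisely the kind of filter that bots with timing variance are built to evade, so treat it as a baseline rather than a defence.

```python
def classify_ctit(click_ts, install_ts, min_seconds=10, max_seconds=24 * 3600):
    """Classify one click/install pair by click-to-install time (CTIT).

    Timestamps are Unix seconds. Both thresholds are illustrative assumptions.
    """
    ctit = install_ts - click_ts
    if ctit < 0:
        return "invalid"             # click recorded after the install
    if ctit < min_seconds:
        return "possible_injection"  # too fast for a real download and first open
    if ctit > max_seconds:
        return "possible_flooding"   # stale click matched to a much later install
    return "plausible"


print(classify_ctit(1_000, 1_003))              # 3 seconds after the click
print(classify_ctit(1_000, 1_000 + 3 * 86400))  # 3 days after the click
print(classify_ctit(1_000, 1_120))              # 2 minutes after the click
```

In practice the distribution of CTIT across a whole channel is more telling than any single pair: a channel whose installs cluster at either extreme deserves a closer look.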

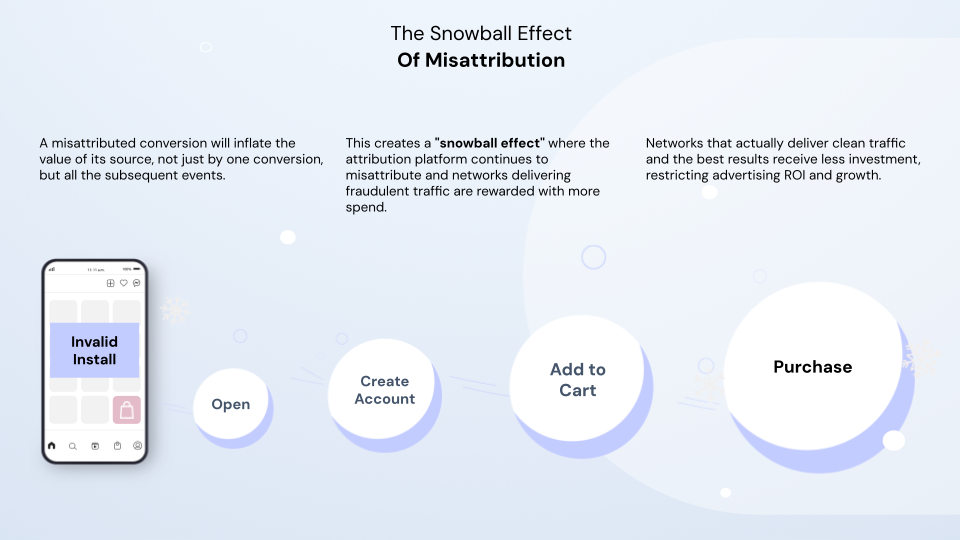

The Misattribution Snowball: How One Fake Install Corrupts Your Entire Channel Mix

This is where the commercial damage compounds. A single fraudulent click that successfully hijacks last-click attribution does more than steal a commission. It inflates that channel's attributed install count, distorts its cost-per-install figure and makes a fraudulent network look like a high performer.

Your campaign data now shows a channel delivering volume. Budget gets reallocated towards it. More fraudulent clicks follow. The snowball grows. Meanwhile, legitimate channels are being starved of budget because their relative performance looks weaker by comparison.
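The arithmetic behind the snowball is simple. The sketch below uses made-up numbers (they are not from any real campaign) to show how fraudulent installs make a channel's reported cost per install look better than what you actually pay per genuine user.

```python
def channel_cpi(spend, attributed_installs, fraudulent_installs=0):
    """Return (reported_cpi, effective_cpi) for one channel.

    reported_cpi counts every attributed install; effective_cpi counts
    only the installs that came from genuine users.
    """
    genuine = attributed_installs - fraudulent_installs
    reported = spend / attributed_installs
    effective = spend / genuine if genuine > 0 else float("inf")
    return reported, effective


# Hypothetical channel: $10,000 spend, 5,000 attributed installs, 3,000 of them fraudulent
reported, effective = channel_cpi(10_000, 5_000, fraudulent_installs=3_000)
print(f"reported CPI: ${reported:.2f}, effective CPI: ${effective:.2f}")
# prints "reported CPI: $2.00, effective CPI: $5.00"
```

On the dashboard this channel looks like a $2.00 bargain, so it wins more budget. In reality every genuine user costs $5.00, and the gap widens as the fraudulent share grows.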

Why MMP Fraud Tools Only Catch Half the Problem

Some MMPs include built-in fraud detection. These tools typically operate at or after the point of attribution, flagging suspicious installs once they have already been recorded. This is post-attribution detection, and it has a fundamental limitation: by the time a fraudulent install is flagged, the click that caused it has already been counted.

Impression-level and click-level fraud, the activity that happens before a user ever reaches your app's install page, goes completely unexamined. This is the top of the funnel, and it is wide open.

How to Improve MMP Attribution Accuracy: A Practical Framework

Improving MMP attribution is not a one-step fix. It requires layered intervention across the full funnel, from the first impression through to post-install engagement. Here is a five-step framework that addresses both the symptoms and the root cause.

Step 1: Layer Pre-Attribution Fraud Prevention on Top of Your MMP

The most impactful change you can make is to intercept fraudulent traffic before it reaches the attribution layer. This means deploying a dedicated fraud prevention solution, such as TrafficGuard for Mobile, that validates traffic at the impression and click level independently of your MMP's own processes.

This approach does not replace your MMP. It verifies it. Your MMP continues to do what it does best. TrafficGuard ensures the data it is measuring is clean.

Step 2: Clean Your Traffic Before It Reaches the MMP

Click-level validation filters out invalid traffic signals in real time. When a click is identified as fraudulent, whether through device signature analysis, timing patterns or behavioural anomalies, it is blocked before it can enter the attribution window. The MMP never sees it. It cannot be hijacked.

This is the critical difference between pre-attribution filtering and post-attribution detection. One prevents the problem. The other discovers it too late.
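As a rough illustration of what a click-level timing check can look like, the sketch below flags any device key that fires more clicks than a set limit within a sliding time window. It is a deliberately simple assumption-laden example, not a description of how TrafficGuard or any other product works: the class name, the limits and the idea of a single `device_key` are all hypothetical.

```python
from collections import defaultdict, deque


class ClickRateFilter:
    """Flag clicks when one device key exceeds max_clicks within window_seconds."""

    def __init__(self, max_clicks=5, window_seconds=60):
        self.max_clicks = max_clicks
        self.window_seconds = window_seconds
        self._recent = defaultdict(deque)  # device key -> timestamps of recent clicks

    def is_valid(self, device_key, timestamp):
        recent = self._recent[device_key]
        # Drop clicks that have aged out of the sliding window
        while recent and timestamp - recent[0] >= self.window_seconds:
            recent.popleft()
        # Record this click even if it is rejected, so a flooder stays blocked
        recent.append(timestamp)
        return len(recent) <= self.max_clicks


# Hypothetical limits: at most 3 clicks per device in any 10-second window
click_filter = ClickRateFilter(max_clicks=3, window_seconds=10)
for ts in [0, 1, 2, 3]:
    print(ts, click_filter.is_valid("device-1", ts))
```

With these limits the first three clicks pass and the fourth is rejected. Because the decision is made when the click arrives, a rejected click never needs to be forwarded to the attribution layer at all. A production system would combine many more signals than click frequency.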

Step 3: Verify Attribution Independently with a Third-Party Solution

Independent verification means your attribution data is cross-referenced against a source that has no commercial relationship with your ad networks. MMP attribution is inherently susceptible to manipulation because fraudsters understand the attribution rules and engineer their activity to satisfy them.

A third-party layer like TrafficGuard applies its own fraud signals, AI-driven behavioural analysis and device intelligence, producing a verification result that is structurally independent from the attribution output.

Step 4: Monitor Post-Install Events for Behavioural Anomalies

Even with strong pre-attribution filtering, some fraudulent activity will attempt to reach the post-install stage. Monitoring event data for behavioural anomalies, such as users who install but never engage, complete unusual in-app journeys or display non-human timing patterns, is a critical secondary check.

Advanced AI and ML-based analysis identifies these signals far more accurately than rule-based threshold monitoring.

Step 5: Cross-Reference MMP Data with Campaign-Level Performance

Attribution data should never be read in isolation. Channels with high attributed install volumes but poor downstream engagement, low retention or abnormal LTV are worth scrutinising. Fraudulent traffic often passes attribution checks but fails to produce genuine user behaviour.

Systematic cross-referencing of MMP attribution data against real campaign-level KPIs is one of the most reliable ways to surface channels that are gaming the measurement system.
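A cross-reference of this kind can start as a very small script. The sketch below flags channels that combine high attributed install volume with weak day-7 retention. The field names, thresholds and sample figures are assumptions made for illustration; substitute whatever your MMP export and your own benchmarks provide.

```python
def flag_suspect_channels(channels, min_installs=1000, min_d7_retention=0.05):
    """Return names of channels with high install volume but weak day-7 retention."""
    flagged = []
    for channel in channels:
        if channel["installs"] < min_installs:
            continue  # too little volume to judge
        retention = channel["d7_retained"] / channel["installs"]
        if retention < min_d7_retention:
            flagged.append(channel["name"])
    return flagged


# Hypothetical export: attributed installs and users still active on day 7
channels = [
    {"name": "network_a", "installs": 12_000, "d7_retained": 240},   # 2% retention
    {"name": "social_b", "installs": 8_000, "d7_retained": 1_200},   # 15% retention
    {"name": "network_c", "installs": 300, "d7_retained": 3},        # below volume floor
]
print(flag_suspect_channels(channels))  # prints ['network_a']
```

A flag here is a prompt for investigation, not proof of fraud. Low retention can also come from poor targeting or a weak onboarding flow.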

MMP Integration: How TrafficGuard Works Alongside Your Attribution Platform

TrafficGuard is designed to complement, not compete with, your existing MMP. Setup is fast and does not require replacing any part of your current measurement infrastructure.

Seamless Setup: SDK Integration, Measurement URLs and Postback Configuration

Integration typically involves three components: embedding the TrafficGuard SDK or configuring measurement URLs within your existing MMP setup, establishing postback configuration to pass verification signals back to your attribution platform, and enabling real-time reporting within the TrafficGuard dashboard.

For most app developers, initial integration can be completed within a single sprint. Full documentation and support are available in the TrafficGuard Help Centre.

Compatible with AppsFlyer, Adjust, Branch, Singular, Kochava and More

TrafficGuard integrates directly with all major MMPs, including AppsFlyer, Adjust, Branch, Singular and Kochava. If you are already running attribution through any of these platforms, you can layer TrafficGuard on top without disrupting your existing setup or data flows.

Real-Time Click Validation vs Post-Attribution Detection: What Is the Difference?

Post-attribution detection identifies fraud after it has already been attributed. The damage, in terms of misallocated budget and corrupted data, has already occurred. Real-time click validation intercepts fraudulent activity before attribution happens, preserving data integrity from the point of entry.

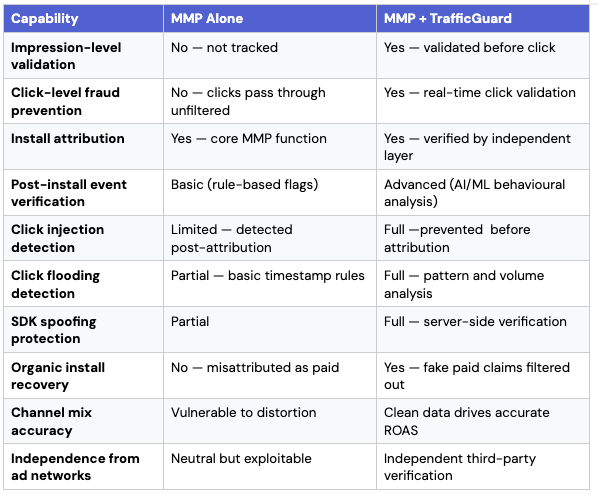

The table below illustrates the full capability difference:

The Benefits of Cleaner Attribution Data for App Marketers

Clean MMP attribution data is not just a technical improvement. It is a commercial one. Here is where the impact is most measurable.

More Accurate ROAS and Channel Mix Decisions

When fraudulent traffic is removed from your attribution model, every channel's reported ROAS reflects genuine user acquisition. High-performing channels are correctly identified and rewarded with budget. Low-performing or fraudulent channels no longer masquerade as strong performers.

The result is a channel mix built on evidence, not manipulation. Budget efficiency improves immediately.

Higher-Quality User Acquisition at Lower CPA

Fraudulent clicks consume acquisition budget without delivering users. Remove them, and the same budget reaches a larger pool of genuine prospects. User acquisition quality improves. CPA drops. The economics of your campaigns shift without changing a single targeting parameter.

Recovering Organic Installs Misattributed to Paid Channels

One of the least-discussed consequences of MMP fraud is organic install suppression. When fraudulent click injectors claim credit for installs that were driven by organic discovery or word-of-mouth, those installs are recorded as paid. Organic channel performance is understated. Paid channel performance is overstated.

TrafficGuard's pre-attribution filtering prevents fraudulent credit claims, restoring organic installs to their correct source. For many app marketers, this changes the picture on channel ROI significantly.

Case Study: How a Mobile Gaming Developer Saved $70K Per Month by Fixing Misattribution

A mobile gaming developer using a leading MMP was seeing strong install volumes from several performance networks. Post-install engagement told a different story. Retention rates were low, in-app purchase rates were below benchmark and ROAS was declining despite increasing spend.

After deploying TrafficGuard alongside their existing MMP, click-level analysis revealed that a significant portion of attributed installs were the result of click injection and click flooding. Fraudulent networks were systematically claiming credit for organic and cross-channel installs.

Once fraudulent traffic was filtered at the click level, the developer redirected budget to verified performing channels. The result was a $70,000 per month reduction in wasted ad spend, alongside measurable improvements in user quality and post-install LTV.

Read the case study here.

The Future of MMP Attribution: What Is Changing in 2025 and 2026

The attribution landscape is undergoing its most significant structural shift since the introduction of the MMP model itself. Understanding what is changing is essential context for anyone making measurement decisions today.

Post-ATT Reality: SKAN, Privacy Sandbox and the Decline of Deterministic Tracking

Since Apple's App Tracking Transparency framework limited IDFA availability to the minority of users who opt in, MMPs have been operating in a far noisier signal environment. SKAdNetwork data is aggregated, delayed and capped by privacy thresholds. Google's Privacy Sandbox is introducing similar constraints on Android.

As deterministic tracking becomes the exception rather than the rule, probabilistic attribution and modelled data fill the gaps. Fraudsters have adapted accordingly, targeting the edges of probabilistic models where confident attribution is hardest to challenge.

From Last-Click to Multi-Touch and Incrementality: The Attribution Model Shift

The industry is slowly moving away from last-click attribution towards multi-touch attribution (MTA) and incrementality measurement. MTA distributes credit across the full user journey. Incrementality testing asks a more fundamental question: would this user have installed without the ad?

Both approaches reduce, but do not eliminate, vulnerability to fraud. Click injection and click flooding are specifically engineered for last-click environments. As models shift, fraud techniques will evolve to target whatever mechanism is doing the attributing.
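The incrementality question can be expressed as a short calculation. The sketch below compares the install rate of a group that saw the ad with a holdout group that did not. It is a minimal illustration with invented figures, and it leaves out the statistical significance testing a real experiment would need.

```python
def incremental_lift(exposed_users, exposed_installs, holdout_users, holdout_installs):
    """Return (incremental_installs, relative_lift) from a holdout test.

    incremental_installs: installs in the exposed group beyond what the
    holdout install rate predicts would have happened anyway.
    """
    exposed_rate = exposed_installs / exposed_users
    holdout_rate = holdout_installs / holdout_users
    incremental_installs = (exposed_rate - holdout_rate) * exposed_users
    if holdout_rate == 0:
        relative_lift = float("inf")
    else:
        relative_lift = (exposed_rate - holdout_rate) / holdout_rate
    return incremental_installs, relative_lift


# Hypothetical test: 3% install rate with ads vs 2% in the holdout group
installs, lift = incremental_lift(100_000, 3_000, 20_000, 400)
print(f"incremental installs: {installs:.0f}, relative lift: {lift:.0%}")
```

In this example roughly 1,000 of the 3,000 installs in the exposed group are incremental. The other 2,000 would likely have happened without the ad, even though a last-click model could credit all 3,000 to paid channels.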

Why Fraud Prevention Becomes More Critical as Attribution Gets Murkier

The less deterministic attribution becomes, the more valuable clean traffic signals are. When your MMP is modelling attribution rather than measuring it directly, the quality of the underlying click and impression data has an outsized effect on output accuracy.

If fraudulent clicks are in the model's input, the model's output is compromised. Pre-attribution fraud prevention is not just a defensive measure in this environment. It is the prerequisite for attribution accuracy. For a deeper look at why built-in MMP fraud tools fall short, see our post on why your MMP's ad fraud protection is not enough.

Stop Guessing, Start Verifying: Improve Your MMP Attribution Today

Your MMP is doing its job. The question is whether the data it is working with is reliable enough to make your campaign decisions on.

TrafficGuard layers real-time click fraud protection across your existing MMP setup, validating traffic before it reaches attribution, recovering misattributed organic installs and giving you a verified data set that your channel mix decisions can actually be built on.

- Pre-attribution click and impression validation

- Independent verification alongside your existing MMP

- AI/ML behavioural analysis for post-install anomaly detection

- Seamless integration with AppsFlyer, Adjust, Branch, Singular and Kochava

- Real-time reporting and transparent fraud signals

Check out our Click Fraud Calculator for Invalid Traffic to estimate how much invalid traffic is currently costing you.

FAQs & Key Takeaways

1. Can TrafficGuard work with my existing MMP contract, or do I need to change platforms?

TrafficGuard integrates alongside your existing MMP without requiring any contract changes, platform switches or re-attribution of historical data. It operates as an independent verification layer on top of your current setup.

2. How does click injection fraud actually work, and why is it so hard to detect?

Click injection exploits the window between when a user initiates an app download and when the install completes. Malware on the device fires a fraudulent click at that precise moment, claiming last-click attribution credit. It is difficult to detect post-attribution because the timing looks plausible. Pre-attribution click validation, which examines device signals and click-to-install timing patterns, is the most effective countermeasure.

3. My MMP already has fraud detection built in. Why is that not sufficient?

Built-in MMP fraud tools operate at or after attribution, not before. They can flag suspicious installs retrospectively but cannot prevent fraudulent clicks from entering the attribution window in the first place. Pre-attribution filtering intercepts fraud before it becomes an attribution event, which is the only way to protect data integrity at the source.

4. How does SKAdNetwork (SKAN) affect my MMP attribution accuracy in 2025?

SKAN reporting is aggregated, delayed (postbacks typically arrive at least 24 to 48 hours after the conversion window closes, and later SKAN 4 postbacks can take longer) and privacy-thresholded, meaning campaigns below minimum volume thresholds return little or no conversion data. This creates significant blind spots in MMP reporting for iOS campaigns. Fraud activity that targets SKAN-tracked campaigns is harder to isolate post-attribution, making pre-attribution click validation more important, not less.

5. What is the difference between multi-touch attribution and incrementality testing?

Multi-touch attribution distributes credit for a conversion across multiple ad touchpoints in the user journey, rather than giving it all to the last click. Incrementality testing measures whether a user would have converted without the ad at all, using holdout groups. Both approaches reduce over-crediting of individual channels, but neither eliminates fraud risk. Fraudsters adapt to attribution model changes.

6. How long does it take to integrate TrafficGuard with an existing MMP setup?

Most integrations are completed within a single development sprint. The process involves SDK integration or measurement URL configuration, postback setup and dashboard access. TrafficGuard supports all major MMPs including AppsFlyer, Adjust, Branch, Singular and Kochava, with detailed documentation available in the Help Centre.

7. What happens to organic installs that have been misattributed to paid channels?

Once fraudulent click-injection and click-flooding activity is filtered out at the pre-attribution stage, the installs those clicks were falsely claiming credit for are no longer attributed to the fraudulent source. Depending on your MMP's attribution logic, they are either attributed to the correct upstream touchpoint or recorded as organic. Either way, your organic performance metrics become more accurate and paid channel ROAS figures reflect genuine delivery.

8. Is ad fraud in mobile apps getting worse in 2025 and 2026?

The volume and sophistication of mobile ad fraud are increasing. AI-powered bots now simulate realistic in-app behaviour, making post-install detection harder. The decline of deterministic tracking reduces signal clarity for MMPs. And as more ad spend moves to performance-based mobile channels, the financial incentive for fraudsters grows. Fraud prevention is not a solved problem. It requires continuous, real-time intervention.
